Researchers at the University of Minnesota Twin Cities have taken the wraps off a hardware device that could revolutionize artificial intelligence (AI) computing.

The team claims that this device, dubbed Computational Random-Access Memory (CRAM), will address one of the most pressing challenges in the field by cutting energy consumption for AI applications by a factor of at least 1,000.

The International Energy Agency (IEA) recently projected that energy consumption for AI is set to more than double from 460 terawatt-hours (TWh) in 2022 to a staggering 1,000 TWh by 2026, roughly equivalent to Japan’s entire electricity usage.
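
To put those two figures in perspective, the short Python sketch below works out the overall growth factor and the yearly growth rate they imply. The assumption of smooth compound growth between 2022 and 2026 is ours, made purely for illustration; the IEA projection quoted above only gives the two endpoints.

```python
# Figures quoted in the article (IEA projection), in terawatt-hours.
BASELINE_TWH = 460.0     # 2022
PROJECTED_TWH = 1000.0   # 2026
YEARS = 2026 - 2022

# Overall growth between the two endpoints.
growth_factor = PROJECTED_TWH / BASELINE_TWH

# Assumption (not from the IEA): growth compounds evenly year over year.
annual_growth = growth_factor ** (1 / YEARS) - 1

print(f"Growth factor 2022-2026: {growth_factor:.2f}x")           # 2.17x
print(f"Implied compound annual growth: {annual_growth:.1%}")     # 21.4%
```

In other words, the projection amounts to demand growing by roughly a fifth every year if the increase were spread evenly.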

“This work is the first experimental demonstration of CRAM, where the data can be processed entirely within the memory array without the need to leave the grid where a computer stores information,” explained Yang Lv, a postdoctoral researcher in the university’s Department of Electrical and Computer Engineering and lead author of the research.

Traditional AI methods involve transferring data between logic units (where information is processed) and memory (where it’s stored), consuming substantial power. CRAM, however, eliminates the need for these energy-intensive transfers by keeping the data within the memory.
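
The argument can be illustrated with a toy energy model. The sketch below is not the researchers' benchmark: the class `EnergyCosts`, both functions, and the cost values are hypothetical placeholders in arbitrary units, chosen only to show why removing transfers matters when moving data costs far more than computing on it. It also assumes, for simplicity, that an in-memory operation costs the same as a conventional logic operation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnergyCosts:
    """Hypothetical per-event energy costs in arbitrary units (not measurements)."""
    transfer: float  # moving one operand or result between memory and logic
    compute: float   # performing one logic operation


def von_neumann_energy(n_ops: int, costs: EnergyCosts, operands_per_op: int = 2) -> float:
    # Each operation fetches its operands from memory and writes one result back.
    transfers = n_ops * (operands_per_op + 1)
    return transfers * costs.transfer + n_ops * costs.compute


def in_memory_energy(n_ops: int, costs: EnergyCosts) -> float:
    # Operands and results stay inside the memory array, so only compute cost remains.
    return n_ops * costs.compute


# Placeholder assumption: one transfer costs 100x as much as one operation.
costs = EnergyCosts(transfer=100.0, compute=1.0)
n_ops = 1_000_000

ratio = von_neumann_energy(n_ops, costs) / in_memory_energy(n_ops, costs)
print(f"Toy model energy ratio: {ratio:.0f}x")  # 301x with these placeholder costs
```

The ratio depends entirely on the assumed costs, so it should not be read as a reproduction of the team's reported savings. The point is only that when transfers dominate the energy budget, keeping data in place removes most of it.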

A payoff worth two decades

The researchers estimate that a CRAM-based machine learning accelerator could cut energy use by a factor of up to 2,500 compared to conventional methods.

This immense breakthrough didn’t happen overnight but is the result of over 20 years of research spearheaded by Jian-Ping Wang, a Distinguished McKnight Professor and Robert F. Hartmann Chair in the Department of Electrical and Computer Engineering.

“Our initial concept to use memory cells directly for computing 20 years ago was considered crazy,” reflected Wang in a press release. “With an evolving group of students since 2003 and a true interdisciplinary faculty team built at the University of Minnesota – from physics, materials science and engineering, computer science and engineering, to modeling and benchmarking, and hardware creation – we were able to obtain positive results and now have demonstrated that this kind of technology is feasible and is ready to be incorporated into technology.”

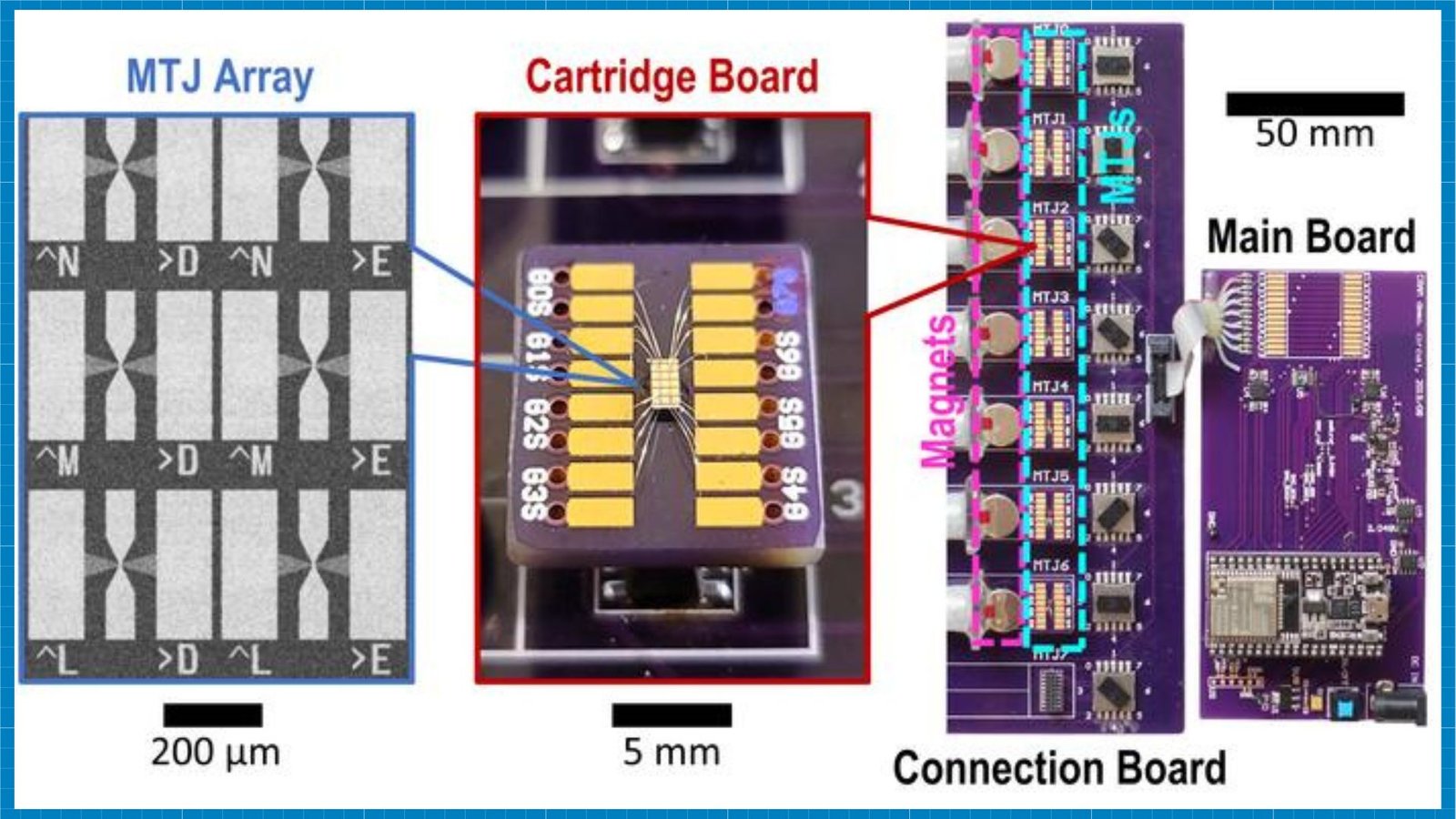

The CRAM architecture is built on the team’s earlier work with Magnetic Tunnel Junctions (MTJs) and nanostructured devices. These have already found applications in hard drives, sensors, and other microelectronics systems. MTJs form the basis of Magnetic Random Access Memory (MRAM), which has been implemented in microcontrollers and smartwatches.

Reimagining computer architecture for AI

CRAM is a fundamental shift away from the traditional von Neumann architecture, which has been the basis for most modern computers. Enabling computation directly within memory cells eliminates the computation-memory bottleneck that has long plagued computer design.

“As an extremely energy-efficient digital based in-memory computing substrate, CRAM is very flexible in that computation can be performed in any location in the memory array,” Ulya Karpuzcu, an Associate Professor in the Department of Electrical and Computer Engineering and co-author of the paper, highlighted.

“Accordingly, we can reconfigure CRAM to best match the performance needs of a diverse set of AI algorithms.”

The technology leverages spintronic devices, which use the spin of electrons rather than their electrical charge to store data. This approach offers significant advantages over traditional transistor-based chips, including higher speed, lower energy consumption, and resilience to harsh environments.

“It is more energy-efficient than traditional building blocks for today’s AI systems,” Karpuzcu added. “CRAM performs computations directly within memory cells, utilizing the array structure efficiently, which eliminates the need for slow and energy-intensive data transfers.”

Having already secured multiple patents, the research team is now looking to collaborate with semiconductor industry leaders, including those in Minnesota, to scale up their demonstrations and produce hardware that can advance AI functionality.

Details of the team’s research were published in the peer-reviewed journal npj Unconventional Computing.

ABOUT THE EDITOR

Amal Jos Chacko

Amal writes code on a typical business day and dreams of clicking pictures of cool buildings and reading a book curled up by the fire. He loves anything tech, consumer electronics, photography, cars, chess, football, and F1.